Multi-NIC vMotion is a no-brainer configuration for performance:

- Faster maintenance mode operations

- Better DRS load balance operations

- Shorter lead time for manual vMotion operations

It was introduced in vSphere 5.0 and improved in 5.5, so let’s get into how to configure it (we’ll be using the vSphere Web Client because that’s what VMware wants us to do nowadays…).

I don’t have an Enterprise Plus license, so no Distributed Switches for me. However, if you do have Distributed Switch licensing, you should be able to extrapolate from my Standard Switch setup how to configure yours.

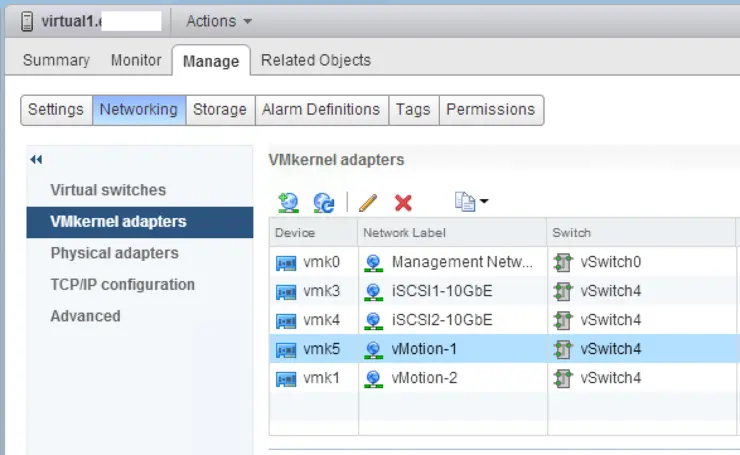

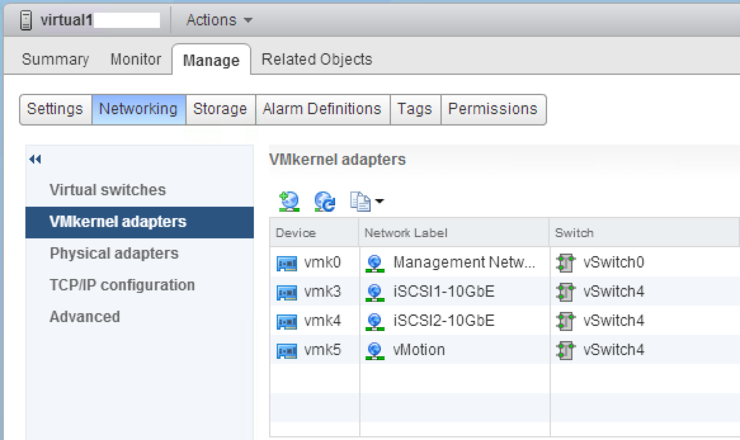

First, log in and navigate to your first host, then Manage -> Networking -> VMkernel Adapters (I already have an existing vMotion vmk adapter, which we will reuse as our first vMotion NIC):

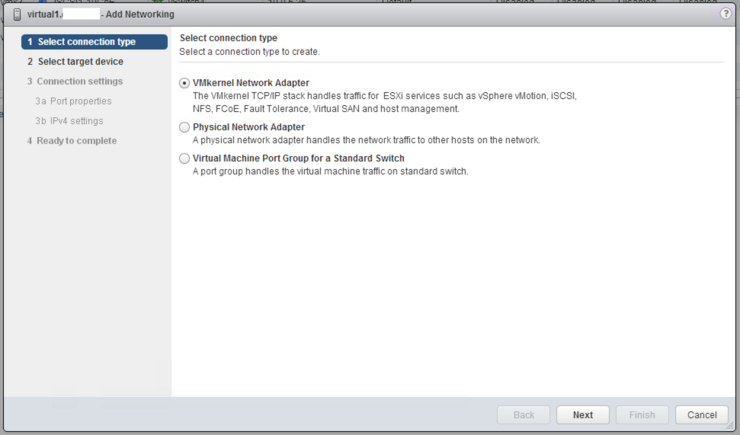

Create the new VMkernel Network Adapter:

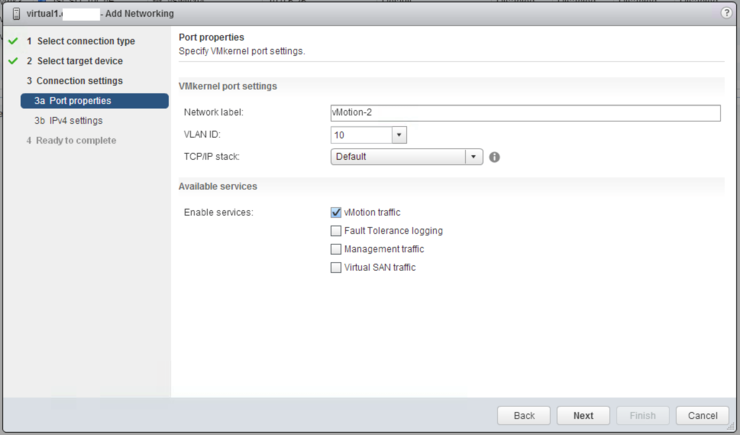

Add your vMotion VLAN and label, and check the vMotion box:

Enter your IP settings and finish the operation.

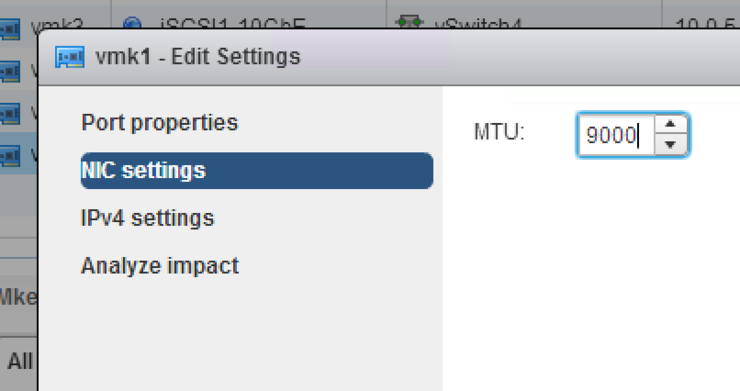

Don’t forget: if you are using jumbo frames, edit the VMkernel adapter you just created and set the MTU to 9000.

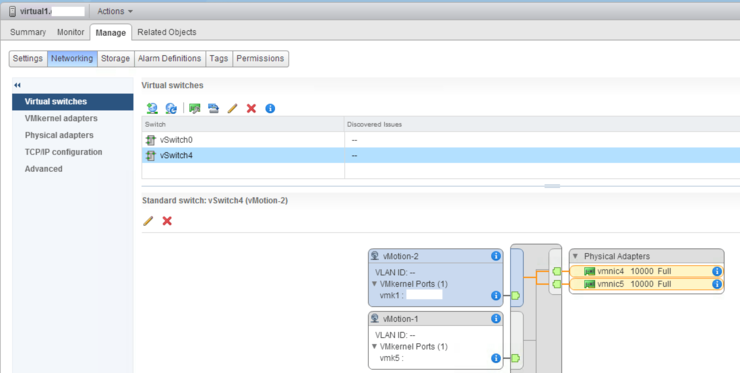
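A quick way to sanity-check a jumbo-frame path is a don't-fragment ping with the largest payload that still fits in one packet. The arithmetic is sketched below, assuming plain IPv4 with no header options; on ESXi this is usually done with something like `vmkping -d -s 8972 <peer vMotion IP>`, but treat that invocation as an assumption to check against your build.

```python
IPV4_HEADER_BYTES = 20  # IPv4 header without options (assumption)
ICMP_HEADER_BYTES = 8   # ICMP echo request header


def max_ping_payload(mtu: int) -> int:
    """Largest ICMP echo payload that fits in one unfragmented IPv4 packet."""
    overhead = IPV4_HEADER_BYTES + ICMP_HEADER_BYTES
    if mtu <= overhead:
        raise ValueError("MTU too small to carry an ICMP echo")
    return mtu - overhead


if __name__ == "__main__":
    for mtu in (1500, 9000):
        # 1500 -> 1472, 9000 -> 8972
        print(f"MTU {mtu}: max payload {max_ping_payload(mtu)} bytes")
```

If a ping at that size fails while a smaller one works, something along the path (vmk, vSwitch or physical switch port) is probably not set to the same MTU.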

I like to also go back into the vSwitches section and rename the first vmk port group to vMotion-1 for posterity.

You can of course repeat this procedure across as many NICs as VMware supports for your pNIC speed: up to 16 NICs with 1Gb and up to 4 with 10Gb.

Note: if you use a 1Gb NIC in your vMotion config alongside your 10Gb NICs, you’ll be limited to the vMotion properties of the 1Gb NIC: 4 concurrent vMotion operations. 10Gb NICs raise that limit to 8 concurrent vMotion transfers. Adding more NICs does NOT allow more concurrent vMotions; instead, it increases throughput, so the vMotion operations are faster.
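To make those limits concrete, here is a minimal sketch that encodes only the numbers quoted above. The function and table names are made up for illustration and are not part of any VMware tooling.

```python
# Limits as quoted in the text, keyed by pNIC speed in Gb.
MAX_VMOTION_NICS = {1: 16, 10: 4}
MAX_CONCURRENT_VMOTIONS = {1: 4, 10: 8}


def max_concurrent_vmotions(nic_speeds_gb):
    """Concurrency is capped by the slowest NIC, not by the NIC count."""
    if not nic_speeds_gb:
        raise ValueError("at least one vMotion NIC is required")
    return min(MAX_CONCURRENT_VMOTIONS[speed] for speed in nic_speeds_gb)


def nic_count_supported(speed_gb, count):
    """True if `count` vMotion NICs of one speed is within the quoted maximum."""
    return 1 <= count <= MAX_VMOTION_NICS[speed_gb]


if __name__ == "__main__":
    print(max_concurrent_vmotions([10, 10]))     # two 10Gb NICs
    print(max_concurrent_vmotions([1, 10, 10]))  # one 1Gb NIC drags the limit down
    print(nic_count_supported(10, 5))            # more than 4 x 10Gb is not supported
```

Note that going from one 10Gb NIC to four leaves the concurrency figure unchanged; only the throughput per vMotion improves.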

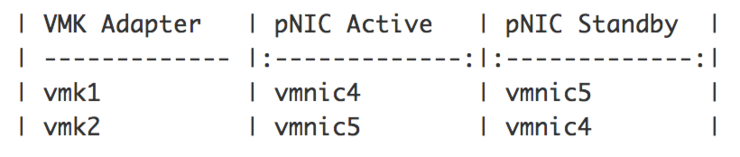

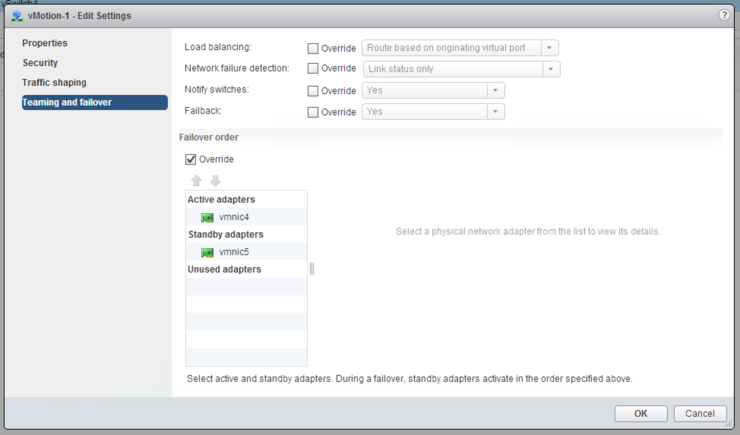

Next we need to set up the failover order for each of our VMkernel adapters. Each needs one active and one standby NIC:

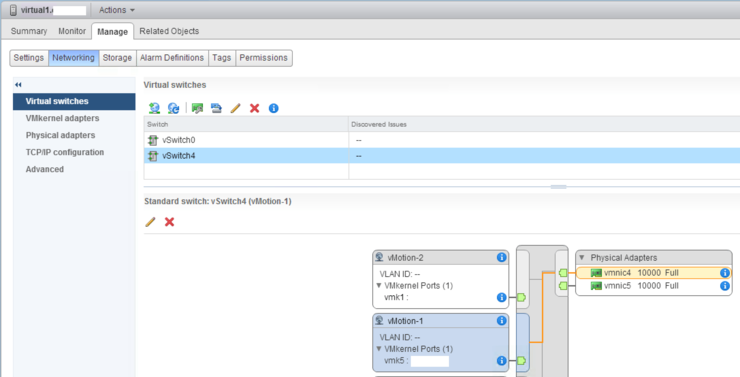

As you can see, our vMotion port groups are each currently using both adapters. We need to fix this:

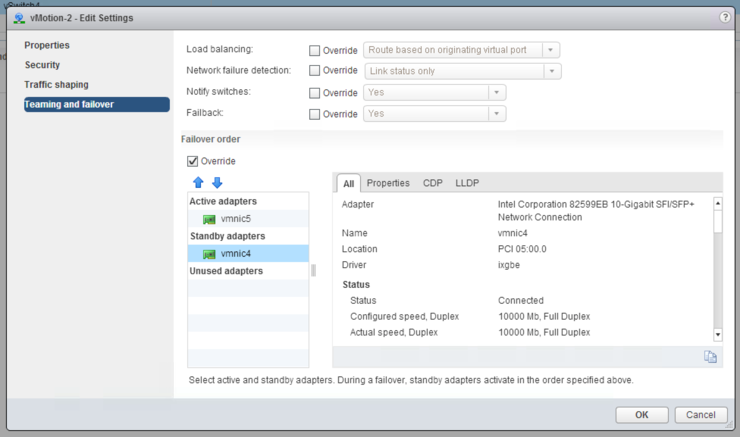

In the first vMotion adapter’s Teaming and failover section, select “Override” and prioritise your NICs as you wish:

Do the same for the second, but swap the active/standby order of the NICs:

Your vMotion port groups should now point at alternating pNICs:
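The alternating layout can be written down as a small sketch, which is handy for checking each host against the intended plan. The names `vMotion-2`, `vmnic0` and `vmnic1` are placeholders for whatever your host uses. The rendered `esxcli` lines are the standard-switch failover command as I understand it, so check them against the command's `--help` output before running anything.

```python
def failover_plan(port_groups, uplinks):
    """Give each port group its own active uplink; the other uplinks become standby."""
    if len(set(uplinks)) != len(uplinks):
        raise ValueError("uplinks must be unique")
    if len(port_groups) != len(uplinks):
        raise ValueError("need exactly one uplink per vMotion port group")
    plan = {}
    for index, port_group in enumerate(port_groups):
        active = uplinks[index]
        standby = [nic for nic in uplinks if nic != active]
        plan[port_group] = {"active": [active], "standby": standby}
    return plan


def esxcli_commands(plan):
    """Render the plan as esxcli failover commands (assumed syntax, verify first)."""
    base = "esxcli network vswitch standard portgroup policy failover set"
    return [
        f"{base} --portgroup-name={pg}"
        f" --active-uplinks={','.join(roles['active'])}"
        f" --standby-uplinks={','.join(roles['standby'])}"
        for pg, roles in plan.items()
    ]


if __name__ == "__main__":
    plan = failover_plan(["vMotion-1", "vMotion-2"], ["vmnic0", "vmnic1"])
    for line in esxcli_commands(plan):
        print(line)
```

With two port groups and two pNICs this gives each port group one active and one standby NIC, mirrored between the two.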

You’ve now successfully configured Multi-NIC vMotion. It’s pretty easy; just make sure the MTU is right if you use jumbo frames and that the failover order is correct for each of the port groups on each host.

This guide couldn’t have been completed without the great articles by Frank and Duncan. I also recommend Duncan’s book on VMware clusters for those just cutting their teeth on the topic.

Why not follow @mylesagray on Twitter for more like this!