I recently had a problem where Veeam was giving bother on one VM that had a dedicated datastore, not allowing hot-add virtual appliance mode to work.

I originally thought it was a problem with CBT (changed block tracking), so I disabled that, with no luck. As it transpires, there were a few problems, all related to datastore formatting:

- The

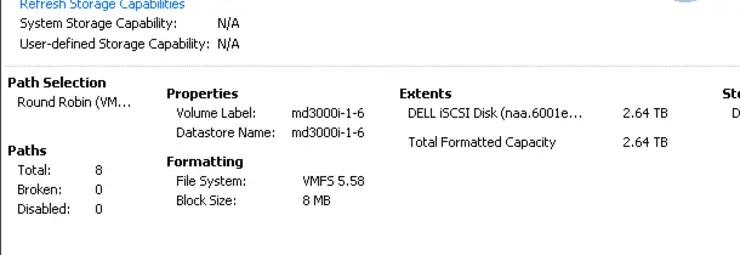

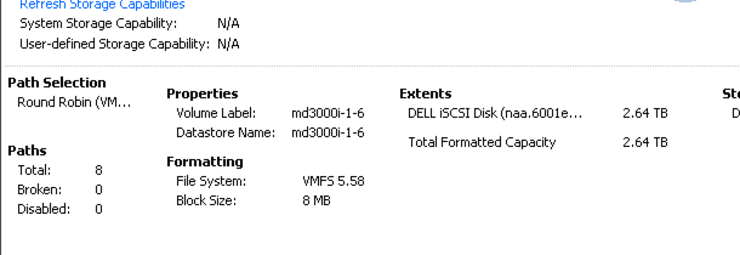

Veeam proxy’s datastore was formatted inVFMS-3with a 2MBblock sizeand upgraded toVMFS-5(retaining its 2MBblock sizeof course - otherwise a reformat would be needed). - The

source machine’s datastore was formatted inVMFS-3with an 8MBblock sizeand later upgraded toVMFS-5(retaining its 8MBblock size). - The target datastore was formatted in

VMFS-5natively with a unified 1MBblock size.

So when the proxy tries to hot-add the disk, the VMFS block size on the source machine’s datastore (8MB) is larger than the block size on the proxy’s datastore (2MB), and the hot-add fails.
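As a rough illustration, that rule can be written as a one-line check. This is only a sketch of the behaviour as I observed it, not anything from Veeam's or VMware's APIs; the function name `hot_add_should_work` is made up for this example.

```python
def hot_add_should_work(proxy_block_mb: int, source_block_mb: int) -> bool:
    """Encode the rule described above: hot-add fails when the source
    datastore's block size is larger than the proxy datastore's.

    Hypothetical helper for illustration only, not a Veeam or VMware call.
    """
    return source_block_mb <= proxy_block_mb


# Block sizes from this post, in MB.
print(hot_add_should_work(proxy_block_mb=2, source_block_mb=8))  # False
print(hot_add_should_work(proxy_block_mb=2, source_block_mb=1))  # True
```

The second call corresponds to a source datastore that has been reformatted as native VMFS-5 (1MB blocks) while the proxy's datastore stays at 2MB.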

One solution was to put it in network mode but this can be slow and it’s not a nice way of doing things, so I wanted to run it in VA mode.

What I ended up doing was shutting down the source machine, migrating it to a VMFS-5 datastore, reformatting its original datastore to native VMFS-5 (native VMFS-5 volumes are all created with a unified 1MB block size) and migrating the source VM back to its original location.

The hot-add then worked as expected. In an ideal world one would reformat all one's datastores to VMFS-5 with the standard 1MB block size, and this is what I am working towards.
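To keep track of which datastores still need that treatment, something like the following would do. It is a minimal sketch that assumes you have already collected each datastore's block size by hand; the datastore names and the `datastores_to_reformat` helper are invented for the example.

```python
NATIVE_VMFS5_BLOCK_MB = 1  # native VMFS-5 volumes use a unified 1MB block size


def datastores_to_reformat(block_sizes_mb: dict[str, int]) -> list[str]:
    """Return the names of datastores whose block size is not the native 1MB.

    `block_sizes_mb` maps a datastore name to its block size in MB.
    """
    return sorted(
        name
        for name, block_mb in block_sizes_mb.items()
        if block_mb != NATIVE_VMFS5_BLOCK_MB
    )


# Hypothetical names; block sizes match the three datastores in this post.
inventory = {"proxy-ds": 2, "source-ds": 8, "target-ds": 1}
print(datastores_to_reformat(inventory))  # ['proxy-ds', 'source-ds']
```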

“What about the VMDK file size limit tied to block size?” I hear you say - well, as of VMFS-5 the 1MB block size now supports 2TB .vmdk files:

The limits that apply to VMFS-5 datastores are:

The maximum virtual disk (VMDK) size is 2 TB minus 512 B. The maximum virtual-mode RDM size is 2 TB minus 512 B. Physical-mode RDMs are supported up to 64 TB.

As of vSphere 5.5 this will change to 62TB, though why you would want a .vmdk this size beats me. I’d have the disk split and clustered if it was a Windows box (e.g. SBS, Exchange or SQL), though if you need this disk size you’re likely already using RDM for those.
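For anyone who never had to deal with it, the block-size question above comes from VMFS-3, where the maximum file size scaled with the block size chosen at format time. The sketch below puts those figures next to the VMFS-5 limit quoted above. The VMFS-3 values are the commonly documented ones as I remember them (rounded, ignoring the 512 B the 8MB case loses), so treat them as an assumption and check VMware's documentation before relying on them.

```python
# VMFS-3: maximum file size in GB for each block size in MB
# (commonly documented values, rounded; an assumption, verify before use).
VMFS3_MAX_FILE_GB = {1: 256, 2: 512, 4: 1024, 8: 2048}

# VMFS-5: 2 TB minus 512 B, regardless of block size (from the quote above).
VMFS5_MAX_VMDK_BYTES = 2 * 1024**4 - 512


def vmfs3_max_file_gb(block_mb: int) -> int:
    """Look up the VMFS-3 maximum file size (GB) for a block size in MB."""
    if block_mb not in VMFS3_MAX_FILE_GB:
        raise ValueError(f"not a VMFS-3 block size: {block_mb}MB")
    return VMFS3_MAX_FILE_GB[block_mb]


for block_mb in sorted(VMFS3_MAX_FILE_GB):
    print(f"VMFS-3, {block_mb}MB blocks: up to {vmfs3_max_file_gb(block_mb)}GB per file")
print(f"VMFS-5, 1MB blocks: up to {VMFS5_MAX_VMDK_BYTES} bytes per VMDK")
```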

Any input on this however is welcome.

Why not follow @mylesagray on Twitter for more like this!